Embracing Generative AI in the workplace

How to responsibly enable the use of generative AI tools at work with the Island Enterprise Browser

2023 is the year that generative AI reached mainstream awareness. ChatGPT captured the world’s attention and imagination for what’s possible. It’s still the early days, but there’s no question that this technology presents a massive opportunity to boost productivity across a wide range of disciplines. Those organizations that embrace AI and experiment with ways to optimize key workflows will surely see positive returns. Many more vendors will enter the market with AI-enabled products while the hyperscale cloud providers continue to differentiate their platforms through integrated AI tools. We can’t see the future, but it’s clear that generative AI will play a transformative role over the next decade.

Of course, as with all transformative technologies, there are well-founded concerns about governance and safe usage of generative AI tools in the workplace. A well-intentioned employee could inadvertently leak confidential or sensitive information when submitting an AI chat prompt or uploading an image file. This seemingly benign action can create an immediate data loss problem, and also a long-term one if that sensitive information becomes part of the dataset used to generate new responses. Recent news reports indicate this exact scenario played out at Samsung, where employees submitted highly sensitive source code to ChatGPT for debugging. Incidents like these expose two orders of risk. The first is the direct impact of inappropriate information handling and leaking sensitive data. The second, and arguably larger, risk is the opportunity cost to organizations that avoid AI tools entirely out of concern for data security. There’s so much positive potential for generative AI that organizations that close that door now may be left behind in the future. A recent paper published by NBER showed a 14% increase in productivity for call center workers assisted by generative AI, and that’s with today’s relatively immature AI product set. The future is bright for organizations that embrace the potential for AI and implement the necessary controls to use it safely.

Smart AI Governance

When considering how to implement smart AI governance in the workplace, start with these four categories:

- User Education and Awareness

Data security when using AI tools is grounded in the same policies and practices used when working with third-party agencies or vendors. User education and basic data protections go a long way in reducing the risk of unwanted data leakage. When a user starts interacting with AI tools, it’s a good opportunity to remind them about the information security policies that govern the interaction.

- Protecting AI Inputs

Adding interactive controls around the AI inputs, or prompts, is a smart way to avoid unwanted information disclosure. Users should get immediate feedback if they attempt to share sensitive data like payment records, social security numbers, or API keys. Some applications, like source code repositories, may be entirely off-limits and restrict any data being shared with an external AI tool. When done right, these controls can prevent inappropriate information leakage without degrading the user experience.

- Inspecting AI Outputs

Today’s generation of AI tools is always confident in its responses, even when those responses contain factual errors. A New York lawyer discovered how damaging this can be when he submitted a court filing that included AI-generated citations that did not exist. Adding some boundaries around how AI-generated output is used is a smart approach. This is especially true for AI-generated code, where a developer may be tempted to copy and paste whole blocks of code without careful analysis.

- Measuring Efficacy

The ultimate goal for AI usage in the workplace is to improve overall efficiency and employee productivity. As organizations develop their AI strategy, it’s smart to consider how to measure the results. This will differ greatly depending on the particular function where AI is being used, but it’s essential to help steer business leaders towards success.

AI And The Enterprise Browser

Island, the Enterprise Browser, is the ideal platform to safely use generative AI tools without compromising data security or risking data leakage. Whether your organization is just getting started with AI and experimenting with different services, or has identified a preferred AI tech stack and wants to maximize its value, Island offers several key capabilities that benefit IT, Security, and end users directly.

Application visibility offers a full accounting of all the web applications and extensions used throughout the organization. This is useful for identifying users or groups who are early adopters and make good candidates for testing AI tools and policies before widespread adoption. Visibility extends to application usage, including the ability to audit all interactions with AI tools to analyze user-generated prompts. All analytics data collected by Island can be shared with your SIEM or data aggregation platform of choice.

Gracefully redirect users to the AI tools your organization prefers, and prevent the use of unsafe alternatives. If a user attempts to use an unwanted AI application or install an unsanctioned AI browser extension, Island can block access and redirect to the sanctioned platform, including the native built-in Island AI Assistant. Browser extensions are fully managed within Island, so you can allow for experimentation, while controlling which applications those extensions can be used with. Or, you can automatically install the preferred extensions while blocking others. Many vendors are offering AI-powered extensions so this is an important area to implement smart governance.

End-user awareness and education are improved through dynamic in-browser messaging. If a user attempts to paste sensitive data, they will see a clear message explaining why the action was prevented and where they can learn more about company data policies. When a user navigates to an AI tool like ChatGPT, they will see a message reminding them about the company's privacy and security policies and the acceptable-use policy for generative AI tools. Showing this type of information in context, at the moment it’s relevant, makes it more effective than alternatives like a company-wide email message.

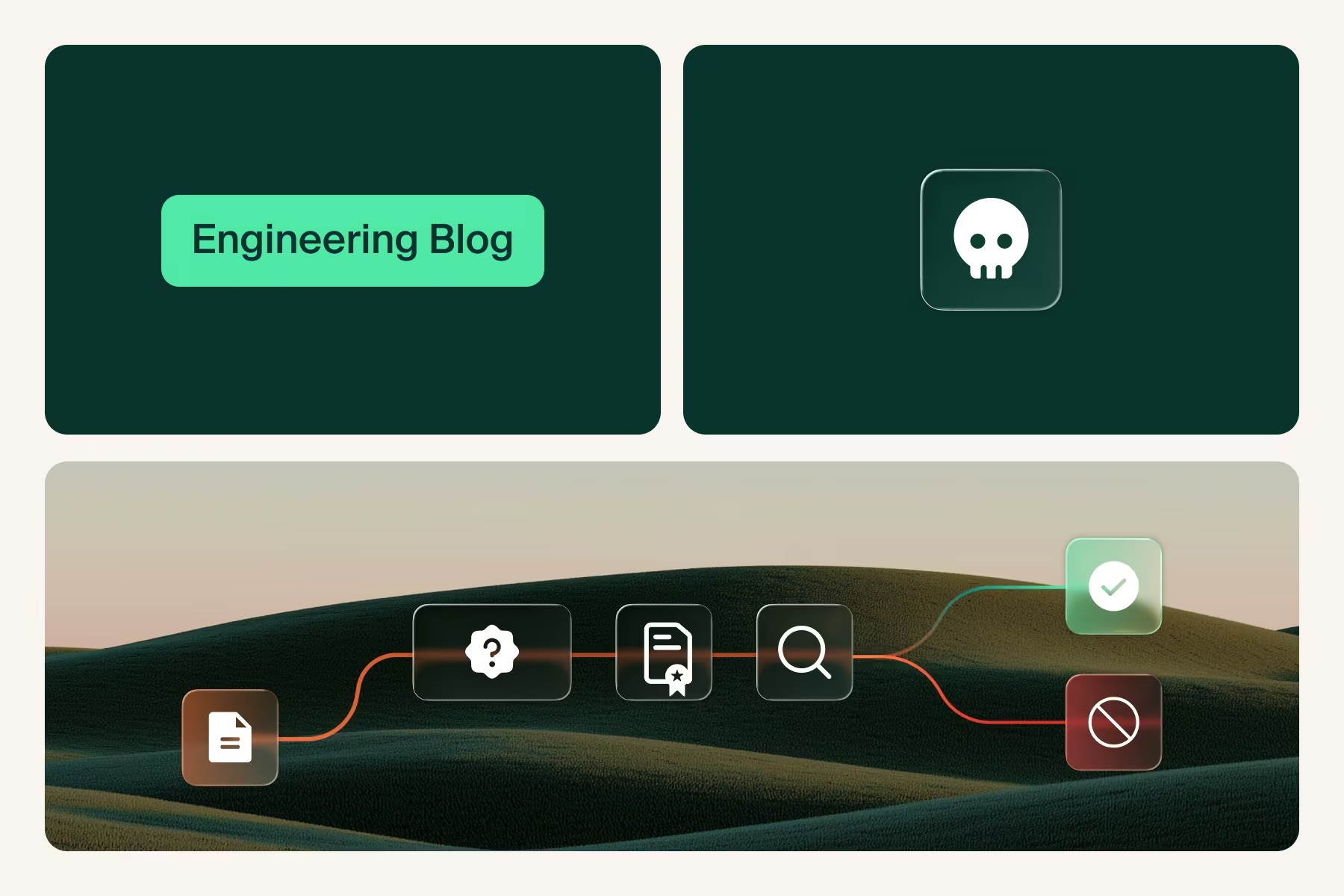

Scan AI-generated code output to govern how it’s used. Generative AI tools will often generate code snippets that are functional but include serious flaws that should never make their way into a production environment. Island can scan code blocks when a user attempts to copy and provide immediate feedback. This approach balances the benefit for developers getting code suggestions from AI, while ensuring that they don’t uncritically accept the generated code and paste it into a production codebase.
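To make the idea of scanning a copied code block concrete, here is a minimal sketch in Python. It is not Island's scanner: the function name `scan_python_snippet` and the two checks it performs (calls to `eval`/`exec` and a `verify=False` keyword argument) are assumptions chosen for illustration, and a real scanner would cover far more patterns and languages.

```python
import ast

# Hypothetical rule set for illustration: built-ins that execute arbitrary code.
RISKY_CALLS = {"eval", "exec"}


def scan_python_snippet(code: str) -> list[str]:
    """Return human-readable warnings for a copied Python snippet."""
    try:
        tree = ast.parse(code)
    except SyntaxError:
        return ["snippet does not parse as Python"]

    warnings = []
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        # Flag direct calls such as eval(...) or exec(...).
        if isinstance(node.func, ast.Name) and node.func.id in RISKY_CALLS:
            warnings.append(f"line {node.lineno}: call to {node.func.id}()")
        # Flag keyword arguments that switch off TLS certificate checks.
        for kw in node.keywords:
            if (
                kw.arg == "verify"
                and isinstance(kw.value, ast.Constant)
                and kw.value.value is False
            ):
                warnings.append(f"line {node.lineno}: TLS verification disabled")
    return warnings
```

In this sketch, a non-empty result would be shown to the developer at copy time, which mirrors the immediate-feedback behavior described above without blocking the copy outright.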

Application boundaries provide an intuitive way to keep sensitive data within certain applications, and within the corporate tenants of those applications, so it cannot be moved or shared to untrusted destinations. As an example, customer support staff can move customer records freely between the corporate tenants of Salesforce.com, Slack, and Microsoft 365, but they can’t paste those records into the ChatGPT prompt window. This same boundary applies to browser extensions, which can be automatically disabled when accessing critical applications.
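The boundary rule in the example above can be sketched as a simple check on the source and destination of a paste. This is an illustrative model only, not Island's policy engine: the `TRUSTED_HOSTS` set, the `acme` tenant hostnames, and the `paste_allowed` function are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical corporate tenants that make up the application boundary.
TRUSTED_HOSTS = {
    "acme.my.salesforce.com",
    "acme.slack.com",
    "acme.sharepoint.com",
}


def host_of(url: str) -> str:
    """Extract the lowercase hostname from a URL ('' if none)."""
    return (urlparse(url).hostname or "").lower()


def paste_allowed(source_url: str, dest_url: str) -> bool:
    """Data copied inside the boundary may only be pasted inside it.

    Data that originated outside the boundary is not restricted by this rule.
    """
    source_trusted = host_of(source_url) in TRUSTED_HOSTS
    dest_trusted = host_of(dest_url) in TRUSTED_HOSTS
    return (not source_trusted) or dest_trusted
```

Under these assumptions, a paste from the Salesforce tenant into the Slack tenant is allowed, while a paste from the Salesforce tenant into an external AI chat page is refused.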

Contextual DLP controls offer further granularity to prevent certain types of data, like credit card numbers or social security numbers, from being shared with an AI tool, regardless of where they originated. If these data types are detected, the user sees a clear message explaining why their action was blocked and a reminder about using sensitive data with AI tools. This control mechanism allows for use of AI tools while preventing sensitive data from being added to a prompt. Island offers a built-in DLP engine and can integrate with external providers to leverage existing rules and classifications.
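As a rough illustration of how this kind of data-type detection can work, the Python sketch below checks prompt text for US social security numbers and credit card numbers. It is not Island's DLP engine: the patterns, the `find_sensitive` function, and the use of a Luhn checksum to cut down false positives are assumptions, and production DLP rules are considerably more thorough.

```python
import re

# Hypothetical patterns for illustration only.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_sensitive(text: str) -> list[str]:
    """Return the sensitive data types detected in the given prompt text."""
    findings = []
    if SSN_RE.search(text):
        findings.append("ssn")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        # Require a plausible length and a valid checksum before flagging.
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("credit_card")
            break
    return findings
```

A caller could block the paste or submission whenever `find_sensitive` returns a non-empty list and use the returned labels to build the explanatory message shown to the user.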

Flexible deployment options for AI tools optimize the user experience. With Island, AI web applications can be deployed as browser extensions, added as a link to the homepage, or brought out of the browser and deployed as a standalone app on the desktop. Regardless of which deployment method users prefer, all the data controls, governance, and auditing visibility are the same. For organizations that choose to standardize on a particular AI vendor, users who attempt to access other AI tools can see a gentle reminder, be redirected to the appropriate corporate standard AI resources, or be blocked entirely. And for users who are new to generative AI tools, Island offers the ideal onramp with a built-in AI Assistant that’s immediately available in a side panel within the browser. Across all deployment models, Island gives you unmatched visibility, audit logging, and metrics to refine policies and measure efficacy.

Looking Ahead

We don’t know exactly what the long-term impact of widespread generative AI usage will be, since the full potential of disruptive technologies is only understood in hindsight. It’s a safe prediction that AI will massively transform the way we work and bring a dramatic increase in productivity. The risks to data security are real, but they’re overshadowed by the opportunity cost to organizations that avoid AI entirely. Across industries, the organizations that harness the power of AI for productivity and efficiency gains will see competitive advantage. The generative AI category is in the early stages, and there will surely be missteps and surprises along the way to full maturity. At this moment, there’s tremendous value in putting in place the tools, policies, and practices to safely navigate the coming AI revolution. This includes user awareness, application visibility, and governance for AI inputs and outputs.

Island, the Enterprise Browser, is the ideal platform to safely use generative AI in the workplace. Island delivers the complete visibility, governance, and DLP controls that IT and Security teams need, along with a frictionless end-user experience that guides and informs users in using AI tools safely and efficiently. With Island, organizations can embrace innovation while safeguarding sensitive data.
