How software teams avoid death by hypergrowth

From the very start of Island, it was clear this wasn’t going to be an ordinary startup situation, the kind with a traditional POC where you rush to build specific things and worry about everything else later.

We were building a really big product, and we knew we needed to do it right from the start and keep it right at scale, no matter how many engineers we onboarded.

We knew the code itself was less important, as it would change frequently, but building a mechanism that would allow us to move fast while continuously onboarding people was critical. And because of that, we decided to invest in building the right infrastructure.

Here are the five main areas we focused on to successfully launch Island.

Create consistency by systemizing the development of new services

We wanted the code to look similar across the system, so we made a skeleton of how a microservice looks, how an extension “service” looks, and made sure they were similar to each other. This made diving into any code and things like code reviews much simpler. It also enabled us to get into someone else’s code very quickly, easily, and confidently, since all of the code looked similar. Naturally, we didn’t want to create a big boilerplate for each service, so we wrapped it in generators and Jenkins jobs to create the code as easily as deploying it. Today, populating a new service takes less than a day and is mostly automated. Engineers focus on the business logic rather than how to install dependencies, or how the code structure should look.

And of course, in the specific cases where a service needs to deviate from the template, it’s immediately obvious that a discussion is needed.
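As a rough illustration of the generator idea, here is a minimal Python sketch. It is not Island’s actual tooling: the file layout in `SKELETON`, the `generate_service` function, and the example service and owner names are all hypothetical, chosen only to show how a shared skeleton keeps every new service looking the same.

```python
from pathlib import Path
import tempfile

# Hypothetical skeleton: file names and contents are illustrative only.
SKELETON = {
    "README.md": "# {name}\n\nOwner: {owner}\n",
    "src/main.py": 'SERVICE_NAME = "{name}"\n',
    "tests/test_main.py": "def test_placeholder():\n    assert True\n",
}


def generate_service(root: Path, name: str, owner: str) -> list:
    """Create a new service directory under `root` from the shared skeleton."""
    service_dir = root / name
    if service_dir.exists():
        # Refuse to overwrite an existing service.
        raise FileExistsError(f"service {name!r} already exists")
    created = []
    for relative_path, template in SKELETON.items():
        target = service_dir / relative_path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(template.format(name=name, owner=owner))
        created.append(target)
    return created


if __name__ == "__main__":
    # Generate into a throwaway directory and list what was created.
    with tempfile.TemporaryDirectory() as tmp:
        for path in generate_service(Path(tmp), "billing", "team-payments"):
            print(path.relative_to(tmp))
```

Because every service starts from the same dictionary of templates, a change to the skeleton is made in one place, and anything that departs from it stands out in review.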

Ensure the highest standard of quality with ongoing tests, coverage, automation, & CR

We then understood that, while we wanted to move fast and release features in rapid phases, our releases had to be of the highest quality and remain that way. Maintaining this standard was extremely important because the browser is such a crucial tool for everyone: bugs could negatively impact our customers’ productivity. It also let us keep progressing without getting “stuck in the mud” with a lot of regression bugs.

First, we had a rule: No code goes unreviewed. Every piece of code must be reviewed by at least one person from the team. Every feature, every bug, every configuration file, even every typo fix. In specific cases we even added code owners to make sure only the code they approve makes it into the product.

Second, we added CI in the form of GitHub Actions to enforce a specific style, test coverage, a successful build, and code quality (no warnings, etc.). While coverage does not always point to quality, it at least forced the engineers to think about what they wanted to test and how.
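A coverage check of this kind can be sketched in a few lines of Python. This is an assumption-laden illustration, not the actual CI configuration: `CoverageReport`, `coverage_gate`, and the threshold passed in are hypothetical, and a real pipeline would read the numbers from its coverage tool rather than take them as arguments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoverageReport:
    """Line counts as a coverage tool might report them (hypothetical shape)."""
    covered_lines: int
    total_lines: int

    @property
    def percent(self) -> float:
        # An empty code base is treated as fully covered.
        if self.total_lines == 0:
            return 100.0
        return 100.0 * self.covered_lines / self.total_lines


def coverage_gate(report: CoverageReport, minimum_percent: float):
    """Return (passed, message); CI would fail the build when passed is False."""
    passed = report.percent >= minimum_percent
    status = "PASS" if passed else "FAIL"
    message = f"{status}: coverage {report.percent:.1f}% (minimum {minimum_percent:.1f}%)"
    return passed, message


if __name__ == "__main__":
    # The minimum used here is an illustrative value, not a documented threshold.
    print(coverage_gate(CoverageReport(covered_lines=970, total_lines=1000), 90.0)[1])
```

The gate only measures how much code the tests touch, which is why it works best as a prompt for thinking about tests rather than as proof of quality.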

Here’s a nice graph on how our coverage looked after we started enforcing it. When we started back in Jan ‘21 we were at around 80%, eventually making it to 97%.

In addition, we invested heavily in automation from the beginning. This gave us the ability to test features E2E and and keep ourselves accountable for its stability and consistency, while always improving our ability to understand and debug issues on a given PR.

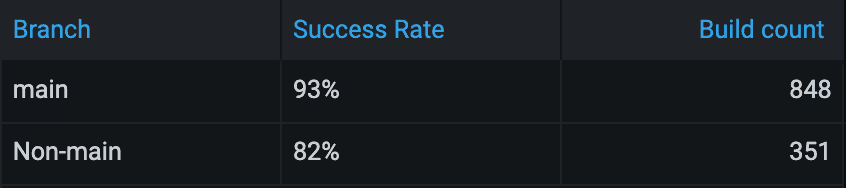

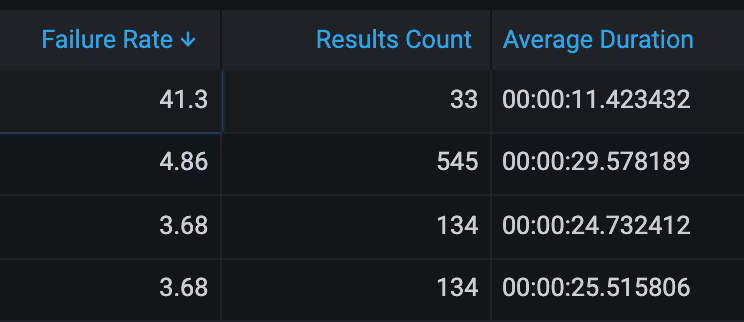

Here is an example of our Grafana presenting the automation success rate:

Another good example is our per-test fail ratio & build time, for monitoring which tests are giving value, which are not, and of course which are slowing us down:

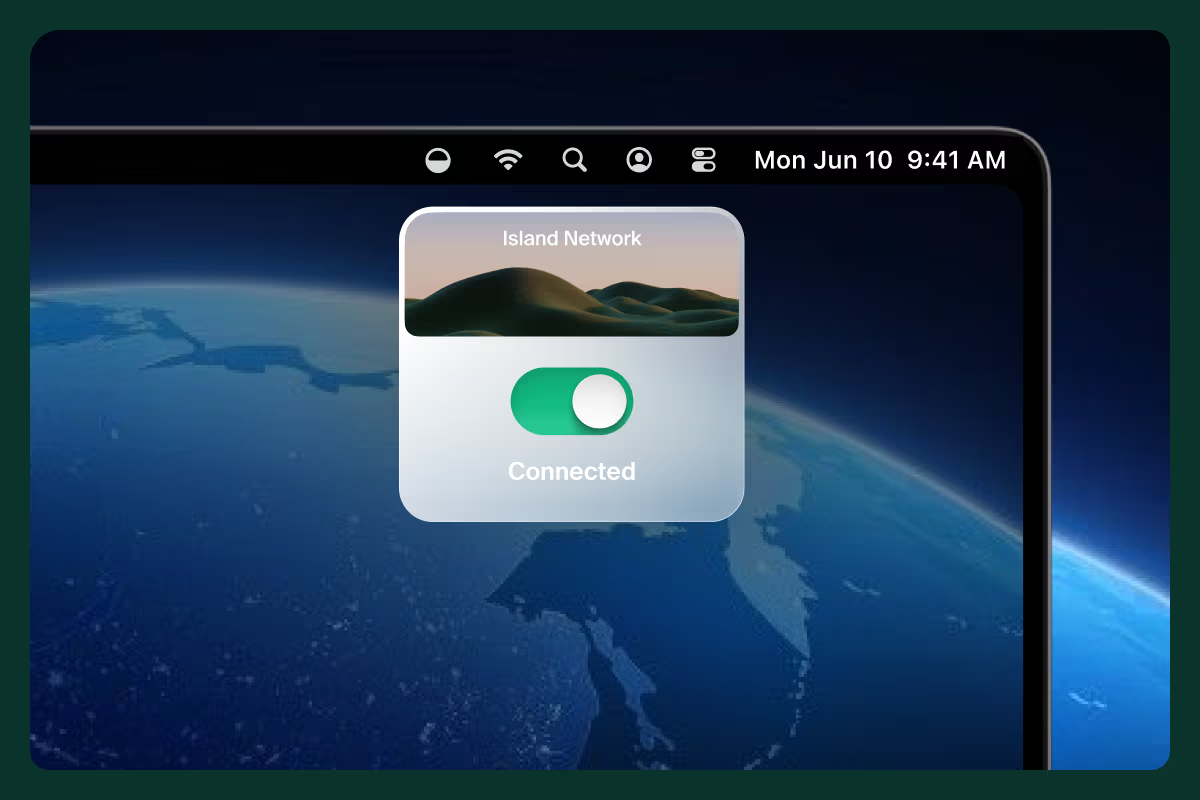
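The per-test numbers behind a dashboard like this can be computed from raw run records. The sketch below is a hypothetical illustration in Python: `RunRecord` and `summarize` are made-up names, and the record shape is an assumption about what a CI system might export.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One execution of one test (hypothetical export format)."""
    test_name: str
    passed: bool
    duration_seconds: float


def summarize(runs):
    """Group runs by test and compute fail ratio and mean duration for each."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run.test_name].append(run)

    summary = {}
    for name, test_runs in grouped.items():
        failures = sum(1 for r in test_runs if not r.passed)
        total_time = sum(r.duration_seconds for r in test_runs)
        summary[name] = {
            "runs": len(test_runs),
            "fail_ratio": failures / len(test_runs),
            "mean_duration_seconds": total_time / len(test_runs),
        }
    return summary


if __name__ == "__main__":
    records = [
        RunRecord("login", True, 2.0),
        RunRecord("login", False, 4.0),
        RunRecord("search", True, 1.0),
    ]
    for name, stats in summarize(records).items():
        print(name, stats)
```

A test with a high fail ratio and no real bugs behind it is a candidate for fixing or removal, while a test with a long mean duration is a candidate for speeding up.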

Make gradual deployment easy with feature flags

Deploy, deploy, deploy. Since we use our own browser on a daily basis, we wanted engineers to be comfortable deploying multiple versions a day while maintaining a high level of quality. Instead of canary or blue-green deployments, we wanted a deployment strategy that fits the Island company strategy: lots of engineers moving at a very fast pace. We chose to keep deploying and keep testing in production while having granular control over how each feature behaves. For that, we use feature flags. Every feature can be controlled by a feature flag (not only a boolean, but any variation of a parameter), and each feature is gradually deployed among customers as well.

First we deploy features to our own browsers, then we deploy them to our demo segment and sales engineering. Afterwards we deploy them to specific customers (beta customers, early-stage adopters) and finally to all customers. This strategy allowed us to control the quality of releases while getting continuous feedback from both internal and external users, as well as from our internal tools and metrics. In addition, it allowed our product managers to have granular control over the product and its deployment, and to decide how and when they wanted to present it to the customer instead of having to depend on engineering. Of course, we’ve added granular control flags in both unit tests and E2E automation in order to ensure the system works in all kinds of variations.
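The staged rollout described above can be modeled with a small amount of code. The following Python sketch is an assumption, not Island’s implementation: the `Segment` enum, the `FeatureFlag` class, and the flag name and values are hypothetical. It only shows how a non-boolean flag can widen from internal users to all customers.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Any, Optional


class Segment(IntEnum):
    """Rollout order: each stage includes all the stages before it."""
    INTERNAL = 1  # the company's own browsers
    DEMO = 2      # demo segment and sales engineering
    BETA = 3      # beta customers and early adopters
    ALL = 4       # every customer


@dataclass(frozen=True)
class FeatureFlag:
    name: str
    default: Any                       # served where the rollout has not arrived
    rollout_value: Any                 # served where the rollout has arrived
    reached: Optional[Segment] = None  # widest stage reached; None means nobody

    def value_for(self, segment: Segment) -> Any:
        """Return the flag value a user in `segment` should see."""
        if self.reached is not None and segment <= self.reached:
            return self.rollout_value
        return self.default


if __name__ == "__main__":
    # Hypothetical flag whose value is a mode string rather than a boolean.
    flag = FeatureFlag("download_scan_mode", default="off",
                       rollout_value="strict", reached=Segment.DEMO)
    for segment in Segment:
        print(segment.name, flag.value_for(segment))
```

Widening the rollout is then a data change (moving `reached` to the next stage) rather than a deployment, and tests can iterate over every `Segment` to check each variation.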

I recommend this strategy to any early-stage startup that wants to move fast while maintaining high quality.

Invest in onboarding as much as your code

As we planned on continuously onboarding engineers, we made sure everything was well documented so a clear plan was presented to each engineer as they joined. Of course, like most companies, we assigned each new engineer a ‘buddy’ to guide them along the onboarding process. Our initial goal with onboarding was to have an engineer fully ramped up on all of our tech and ready to ship code into the product by the time onboarding was finished. The engineer received a clear checklist of what needed to be done and which exercises were to be completed. There were planned checkpoints between technologies, each including a sync with their assigned buddy where they’d show what they had worked on. In addition, the engineer would add a module that improved the developers’ day-to-day work, followed by a “kudos” from their buddy, shared with the whole company, to celebrate what the new team member achieved in such a short time.

What’s important to understand is that onboarding is not a static, one-time, “check the box” creation. It’s an ongoing process.

Every new employee joining the Island team improves and fixes the onboarding flow as they go, whenever something is wrong or no longer relevant. In addition, we do a retrospective with the engineer and their buddy to see what other items we need to add to the onboarding. For example, our onboarding originally focused only on technology. But over time, we added architecture sessions, a product functionality overview, automation and other areas.

Create visibility into your production environment via monitoring & alerting

Finally, you can’t keep this flow going without visibility and alerting. We added logs everywhere and set up alerts to dedicated Slack channels on every error. We assigned a “developer on duty” to continuously investigate bugs and improve our monitoring infrastructure. We added dashboards to ensure quality as well as user experience. They measured call latency, the timing of browser events, and the error rate and uptime of production cloud services, all alerting into Jira.
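To show how dashboard metrics like error rate and latency can turn into alerts, here is a minimal Python sketch. It rests on assumptions: the `RequestSample` shape, the `evaluate` function, the nearest-rank p95 calculation, and the thresholds are all hypothetical, and the sketch only builds alert messages rather than calling Slack or Jira.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestSample:
    """One observed call to a production service (hypothetical shape)."""
    latency_ms: float
    is_error: bool


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def evaluate(samples, max_error_rate, max_p95_latency_ms):
    """Return alert messages for a window of samples; empty list means healthy."""
    if not samples:
        return []
    alerts = []
    error_rate = sum(s.is_error for s in samples) / len(samples)
    if error_rate > max_error_rate:
        alerts.append(f"error rate {error_rate:.1%} exceeds {max_error_rate:.1%}")
    p95 = percentile([s.latency_ms for s in samples], 95)
    if p95 > max_p95_latency_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds {max_p95_latency_ms:.0f} ms")
    return alerts


if __name__ == "__main__":
    # Illustrative window: 18 fast successes and 2 slow failures.
    window = [RequestSample(100.0, False)] * 18 + [RequestSample(900.0, True)] * 2
    for alert in evaluate(window, max_error_rate=0.05, max_p95_latency_ms=500.0):
        print(alert)
```

In a real setup the returned messages would be handed to whatever notifier the team uses, so the developer on duty sees them in the same place as the logs.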

Summary

It’s important to make a conscious effort to recognize when your organization is in scale mode. Every tiny decision is important, even the small stuff like how you organize a specific file in the development environment file system or taking the time for a regular retro with new employees.

Find a way to create an environment where quality is the top priority but does not cause fear of deploying and merging. Give your engineers the tools they need to deploy their code comfortably, while putting strict methods in place for granular control over what is deployed and when, as well as for ensuring overall quality. In addition, helping product leadership understand those decisions will create a healthy work environment while enabling the speed an early-stage startup needs to grow fast.
