Enable AI, Safely. How Island AI Protect Gives Security the Visibility to Say Yes.

Island AI Protect gives enterprises the visibility and control to safely enable AI everywhere with one unified platform.

AI is everywhere. Employees and contractors use it in consumer browsers, AI browsers, desktop apps, extensions, and terminals, whether in the office, at home, or on the road. Some work on managed corporate devices, others on personal laptops, and many access AI through personal accounts that IT has no visibility into. They connect over everything from corporate networks to coffee shop Wi-Fi.

We already know that AI is a game-changer for productivity and innovation. But it's also creating new kinds of risks. Most enterprises try to solve this by stitching together a patchwork of legacy tools and AI security point solutions, each with its own limitations.

DLP tools can't see all browser-based activity. Traditional SASE tools force binary block-or-allow decisions. And new AI security vendors often force teams to rebuild policies from scratch. Before long, organizations are adding yet another layer, such as a Zero Trust Network Access (ZTNA) product, just to secure AI access to internal apps.

Stitching together 7+ tools just isn't working. It creates policy chaos and data gaps that expose your business, and AI makes those gaps more serious. A KPMG survey found that 48% of employees admit to uploading sensitive data into AI tools. That means customer lists, product roadmaps, and even protected health information could be flowing into AI models you don't control. The benefits of AI shouldn't come at the cost of data control or risk management.

Introducing Island AI Protect

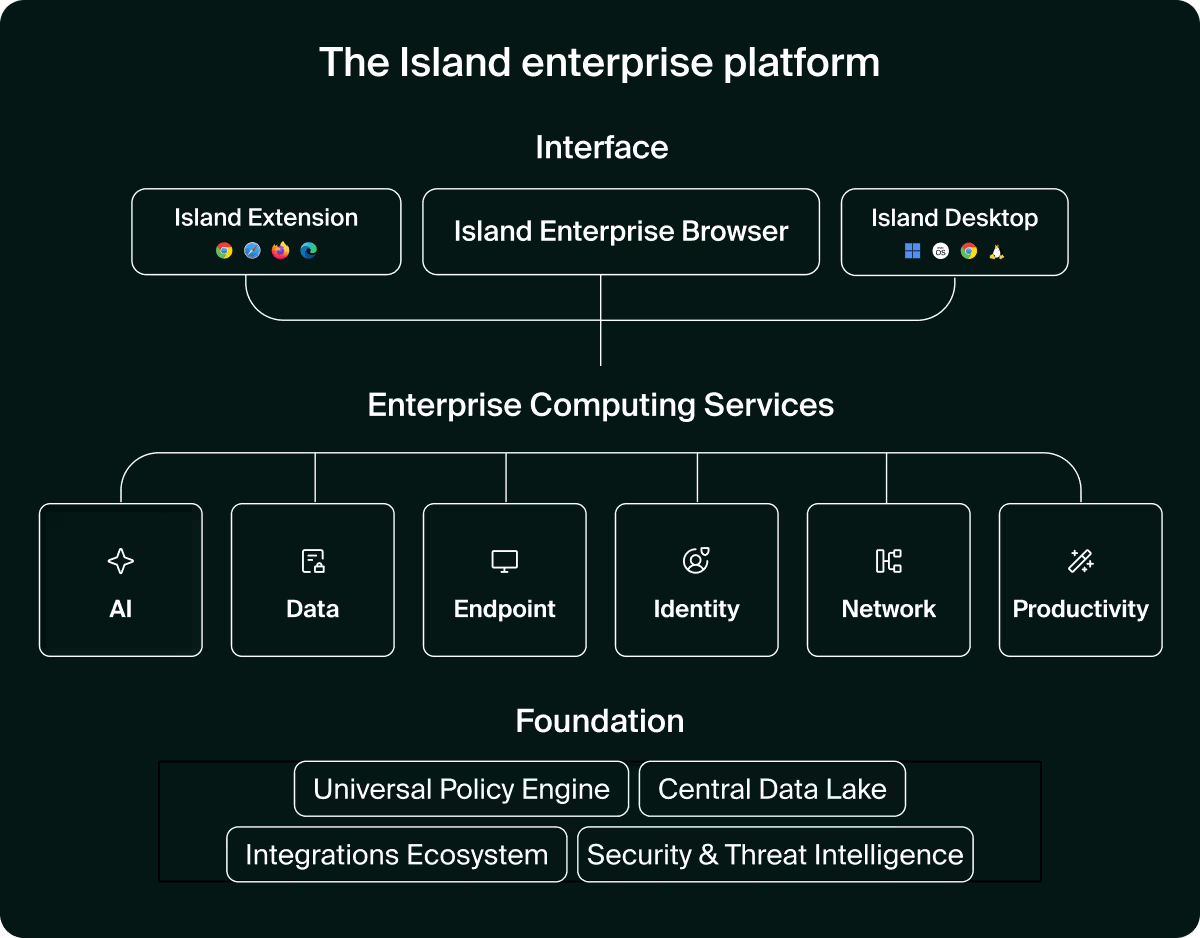

Now with full Island Enterprise Platform capabilities that go beyond the browser, covering every surface where AI runs.

Island AI Protect enables employees and contractors to safely use AI everywhere, without requiring security to juggle fragmented tools. The Island Enterprise Platform is powered by a single policy engine that protects every surface across web and desktop.

Watch the AI Protect 2.0 Product Announcement.

Island enables AI at work anywhere by running across your entire workspace with:

- The Island Enterprise Browser

- The Island Extension for other browsers

- Endpoint and network protection for desktop and internal apps

- API integrations for those using AI elsewhere

Island AI Protect takes the Enterprise Browser's built-in data protection, network security, access controls, and compliance capabilities even further, with new controls and features designed for how organizations actually use AI. These include ready-to-use dashboards, AI-native controls, in-browser user guidance, visibility into prompts and MCP calls, and device protection purpose-built for AI.

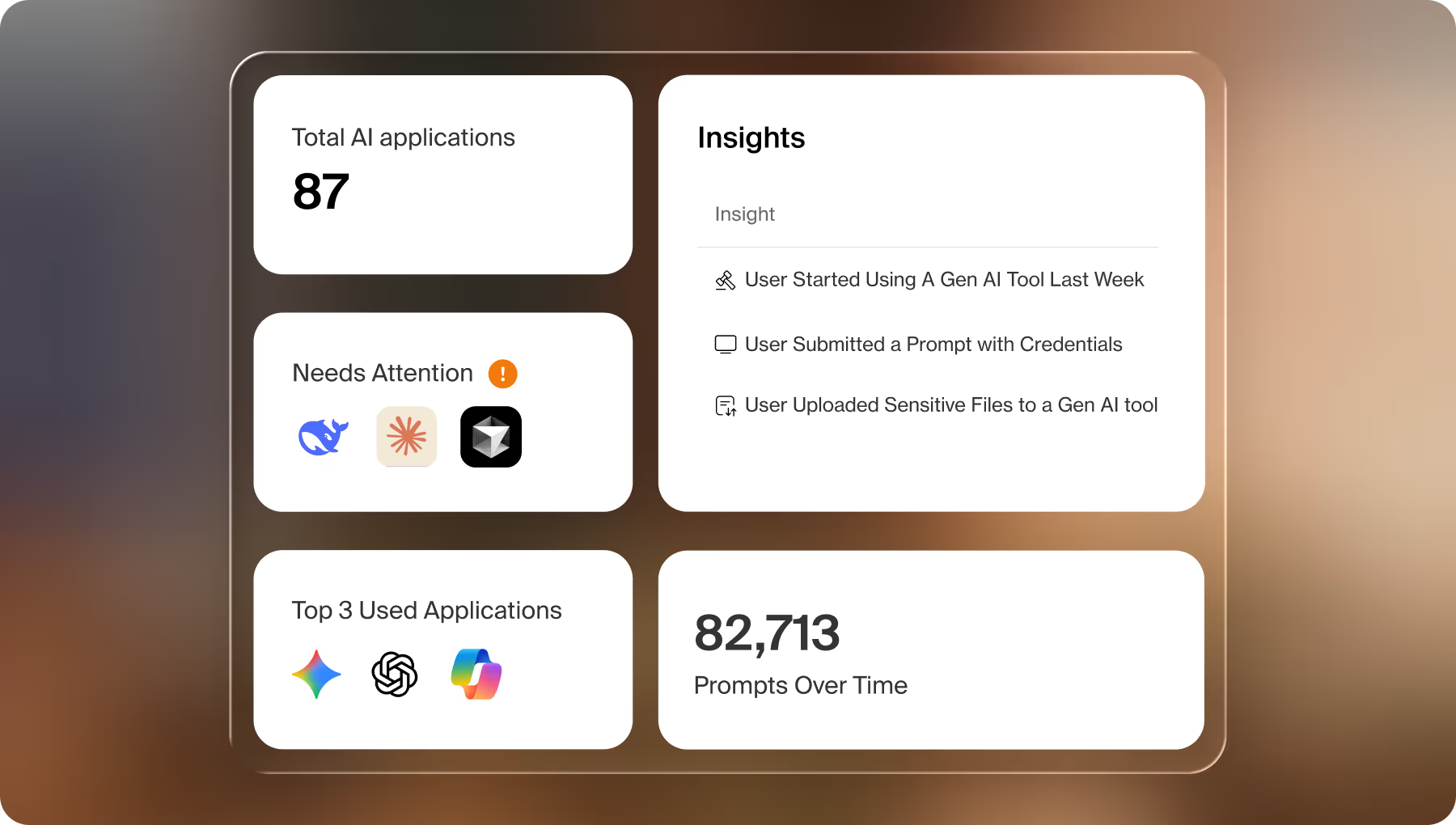

See exactly where AI lives and act on it

Before organizations can govern AI, they need to see all of it.

Island unifies visibility across browser and endpoint AI usage with dashboards and audit logs that give security and IT a real-time, organization-wide picture of AI activity. Organizations always know where AI lives, who is using it, what data it is accessing, and whether users are on corporate or personal tenants. Island also monitors more than 18,200 AI extensions with real-time risk scoring, so security teams know exactly what is running across their environment and how much risk each one carries.

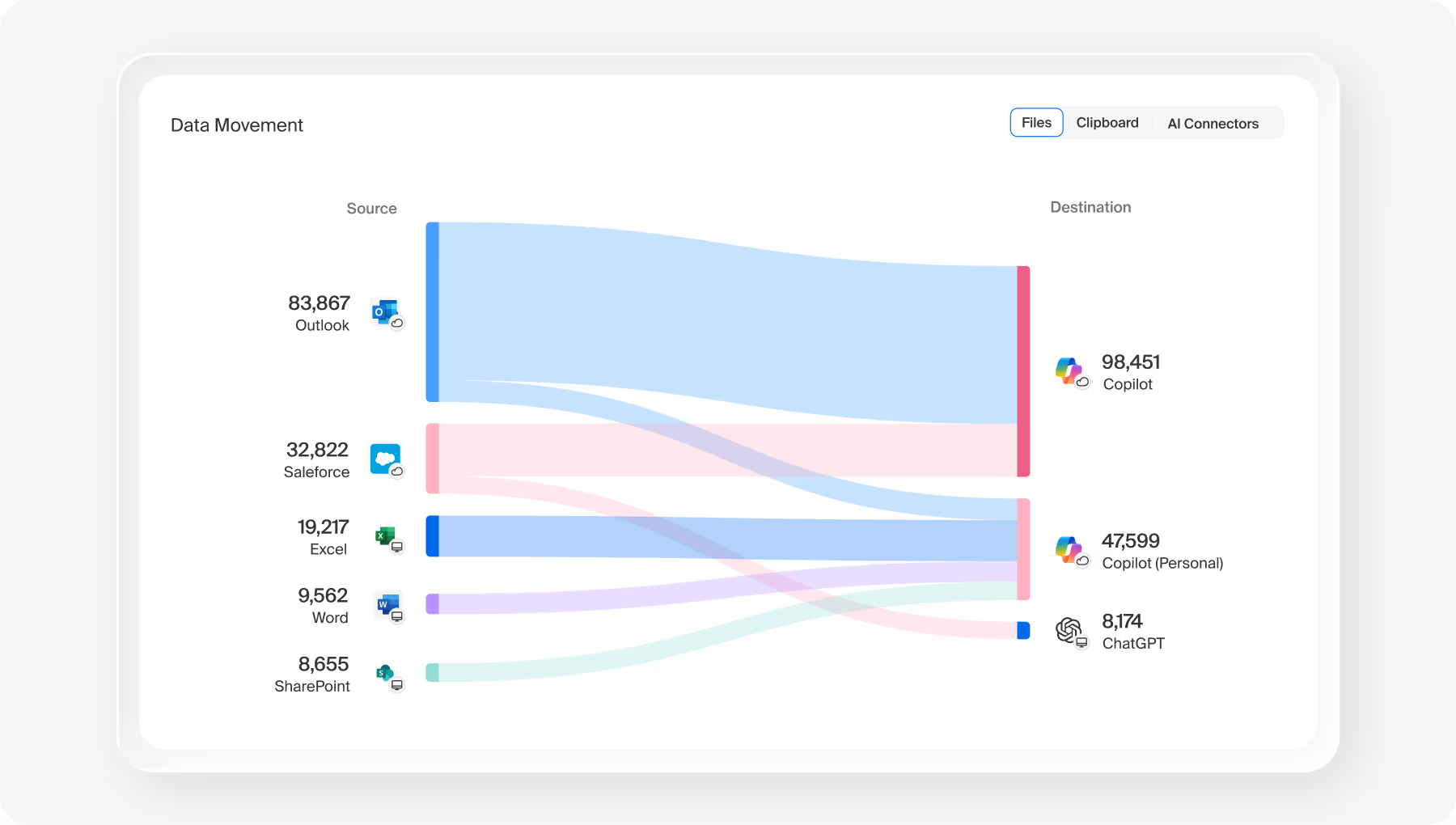

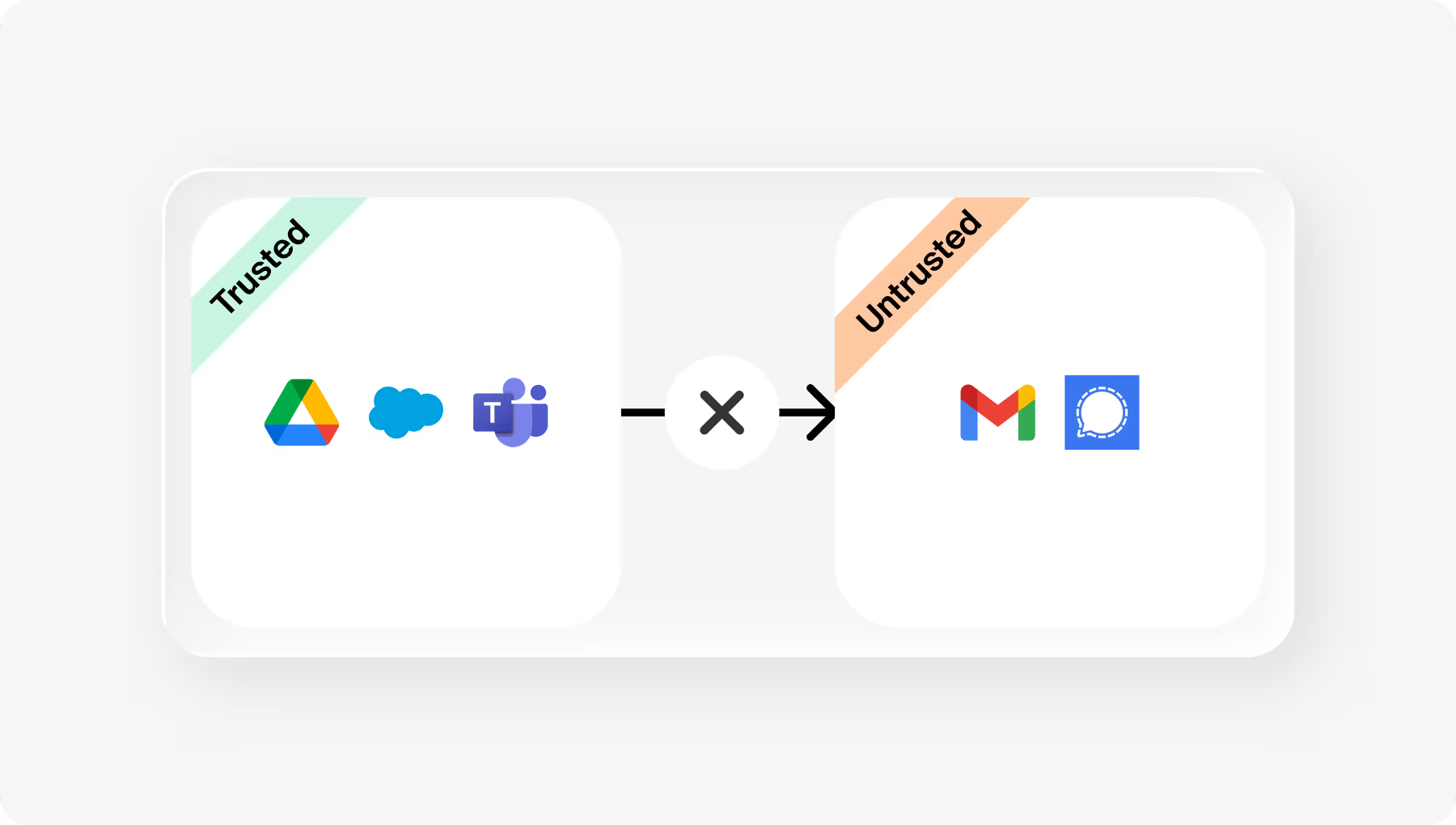

Data lineage tracks corporate information from source to destination across applications, desktop tools, and integrations, revealing both expected and unexpected AI usage patterns. That includes knowing which tenant the data is moving to. When an employee copies data from a corporate tool and sends it to a personal ChatGPT account rather than the corporate one, Island sees it.

When data moves from a desktop app or an internal integration into an AI provider, Island sees that too. That level of granularity turns visibility into business decisions: it identifies which AI apps are creating value and which are creating risk, and it gives teams the confidence to say yes to more AI, knowing they'll see exactly when to course-correct.

Extend visibility into MCP calls to your tools

Island extends that same visibility into Model Context Protocol (MCP) tool calls, so organizations see how AI providers connect to enterprise systems and move data between them. Teams know which integrations are active, who is using them, and when actions go beyond read access to modifying or transferring data. When data flows between tools like Slack and ChatGPT, Island sees exactly what company knowledge is being accessed or changed.

Prove compliance with deep audit logging

Island provides the deepest last-mile audit logging available for AI, including session recordings, screenshots, mouse clicks, and keystrokes. This level of detail gives security teams the evidence they need for regulatory compliance, with a complete record of every AI interaction across the organization.

Here's what that looks like in practice. A security team notices that a user uploaded a file containing sensitive material to ChatGPT. Island surfaces the full conversation, including the prompts, the responses, and the file contents, captured directly at the last mile. No traffic inspection is required, and there are no blind spots in the record.

How TaskUs built an AI governance program that scales

TaskUs, a business process outsourcer (BPO) with over 60,000 employees, adopted a consistent three-step methodology for AI governance. First, it gained visibility into how employees were actually using AI. Next, it made informed policy decisions based on that data, including sanctioning the tools people actually wanted to use. Finally, it enforced those controls through Island's platform. TaskUs started with gentle in-browser reminders that guided users toward approved tools, and later moved to automatic redirects as its policies matured.

Policy updates rolled out across the entire organization in minutes, keeping enforcement unified, consistent, and seamless.

Read the full TaskUs story.

Beyond allow or block. Context-aware policies that match the real world.

Not every user, device, or scenario carries the same level of risk. Island AI Protect makes it possible to match policy to reality.

Instead of approving or blocking every AI tool outright, security and IT teams can create context-aware policies that define the right level of access for each situation. An employee on a managed device with strong posture might be able to access any AI tool freely. A contractor on an unmanaged device might only access one sanctioned application, with file uploads restricted entirely. And every scenario in between can be configured without writing a single new rule from scratch.

Users get the AI access they need while security teams maintain the control they require, without anyone having to make a binary call.

Here's another example. A user copies data from corporate Salesforce and tries to paste it into a personal Gemini account. Island stops it. When they switch to the corporate Gemini tenant, Island recognizes it and lets the data flow freely. The user gets the freedom they need, and the organization keeps the data where it belongs.
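This post does not describe Island's policy syntax, so the following is only a minimal Python sketch of the kind of context-aware decision described above, not Island's policy engine. Every name in it (`AccessContext`, `decide`, `CONTRACTOR_APPS`, the string labels, and the rules themselves) is a hypothetical stand-in chosen to mirror the examples in this section: a managed-device employee, an unmanaged-device contractor, and corporate data headed for a personal tenant.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass(frozen=True)
class AccessContext:
    """One AI interaction, described by hypothetical context attributes."""
    user_type: str          # "employee" or "contractor"
    managed_device: bool    # device is corporate-managed
    strong_posture: bool    # device passed posture checks
    app: str                # AI app being used, e.g. "chatgpt" or "gemini"
    tenant: str             # "corporate" or "personal" account
    action: str             # "visit", "paste", or "upload"
    corporate_data: bool = False  # payload originated in a corporate app


# Hypothetical: the single AI app sanctioned for contractors on unmanaged devices.
CONTRACTOR_APPS = {"chatgpt"}


def decide(ctx: AccessContext) -> Decision:
    """Evaluate illustrative rules in order; the first matching rule wins."""
    # Data boundary: corporate data never moves into a personal tenant.
    if (ctx.corporate_data and ctx.tenant == "personal"
            and ctx.action in {"paste", "upload"}):
        return Decision.BLOCK
    # Employees on managed devices with strong posture may use any AI tool.
    if ctx.user_type == "employee" and ctx.managed_device and ctx.strong_posture:
        return Decision.ALLOW
    # Contractors on unmanaged devices: one sanctioned app, no file uploads.
    if ctx.user_type == "contractor" and not ctx.managed_device:
        if ctx.app in CONTRACTOR_APPS and ctx.action != "upload":
            return Decision.ALLOW
        return Decision.BLOCK
    # Scenarios in between would get their own tiers; this sketch denies by default.
    return Decision.BLOCK
```

Putting the data-boundary rule first means no access tier can override it. In this sketch, pasting Salesforce data into a personal Gemini tenant is blocked even for an employee on a healthy managed device, while the same paste into the corporate tenant falls through to that employee's "allow" tier.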

Three layers of data protection

Island safeguards sensitive data across three layers, so enterprise data never reaches an AI provider it shouldn't.

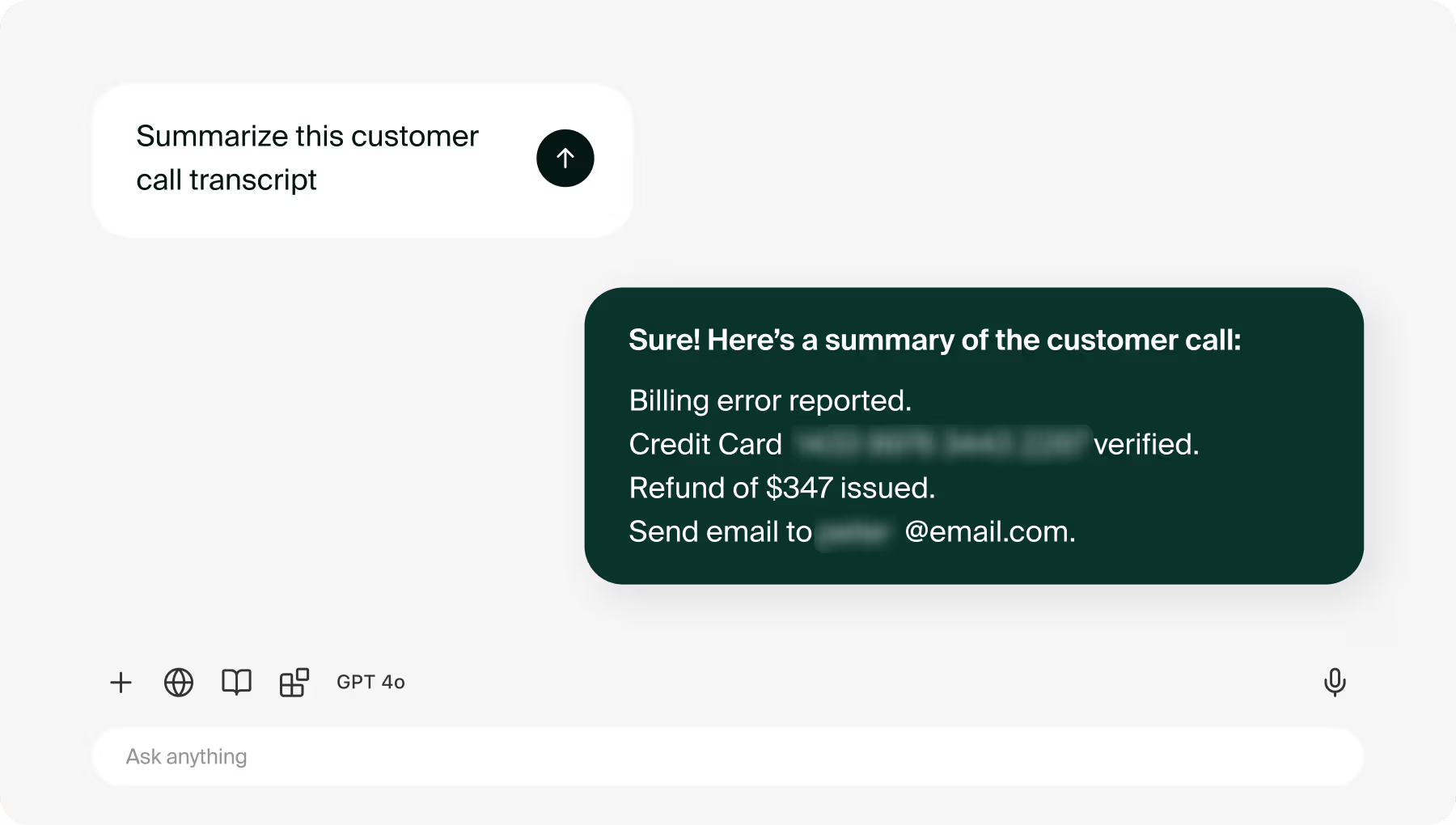

At the prompt level. Data protection begins before anything reaches the AI application. Island sanitizes prompts in real time, redacting sensitive information such as customer data, payment details, or PII. Recently, private ChatGPT conversations surfaced in Google Search Console. It was the first leak of its kind, but not the first time information has escaped AI tools. With Island, employees stay productive and AI remains useful, because prompts are cleaned intelligently without breaking context.
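The post does not say how Island detects sensitive data, so the following is a deliberately simple Python sketch of prompt redaction using regular expressions, a swapped-in technique rather than Island's method. The patterns, placeholder tokens, and the `redact_prompt` and `luhn_valid` helpers are all hypothetical. The sketch shows the "without breaking context" idea: sensitive spans become typed placeholders such as `[EMAIL]`, so the prompt keeps its shape for the model.

```python
import re

# Hypothetical patterns for a few common PII types. Production detectors are
# far more thorough; these only illustrate typed, context-preserving redaction.
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Check a digit string with the Luhn checksum used by payment cards."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def redact_prompt(prompt: str) -> str:
    """Replace sensitive spans with typed placeholders before sending a prompt."""
    def card_sub(m: re.Match) -> str:
        digits = re.sub(r"\D", "", m.group())
        # Only redact digit runs that pass Luhn, to avoid eating order numbers.
        return "[CARD]" if luhn_valid(digits) else m.group()

    prompt = EMAIL.sub("[EMAIL]", prompt)
    prompt = SSN.sub("[SSN]", prompt)
    return CARD.sub(card_sub, prompt)
```

For example, `redact_prompt("Email jane.doe@example.com, card 4111 1111 1111 1111, SSN 123-45-6789.")` returns `"Email [EMAIL], card [CARD], SSN [SSN]."`, while a 16-digit order number that fails the Luhn check is left untouched.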

At the data boundary. Corporate content only moves between approved corporate apps. If a contractor tries to upload a Salesforce report to a personal ChatGPT account, Island can automatically redirect them to the enterprise version instead, with an in-browser notification explaining why. Personal browsing stays private while corporate data stays within corporate boundaries. These boundaries work seamlessly across web and desktop environments, helping keep internal data where it belongs.

At the settings layer. Island enforces native AI controls that disable model training by default for top AI chatbots, even on free corporate accounts. These policies add another layer of protection that helps keep corporate data out of external models. Additional safeguards, such as limiting memory and blocking connections to custom Model Context Protocol servers, keep AI tools powerful but contained.

More than a point solution

Island AI Protect is not another layer added to an already fragmented stack.

It is built into the Enterprise Platform that already governs access, security, and productivity across the organization. One policy engine covers the browser, desktop, extensions, and network simultaneously. One dashboard gives visibility across every entry point. And because Island owns the presentation layer where work actually happens, the protection is deeper than anything a point solution sitting outside the browser can deliver.

One platform means no patching together 7+ solutions, no fragmented coverage, and no blocking AI just to stay safe. This consolidation also delivers significant cost savings by eliminating the licensing and maintenance overhead of multiple security vendors.

Say yes to more AI

AI Protect was not built to restrict AI. It was built to make it safe to enable more of it.

When security and IT teams have complete visibility, layered data protection, and context-aware policy controls, the answer to new AI tools stops being "we need to review this" and starts being yes. Faster. More often. With confidence.

Any AI your team wants. Every control your enterprise needs.

With AI protection as the foundation, the next step is getting more value from the AI you have already invested in. Read how the Island AI Browser embeds any AI provider directly into the flow of work, made smarter by enterprise context.

Request a demo to see Island AI Protect in action.
