Ready for Mythos: Island's AI-Assisted Security Methodology

A look inside Island's AI-assisted threat research and red teaming methodology, and why defenders who move first have a structural advantage.


Introduction

The landscape has changed. The response has to be structural, not reactive.

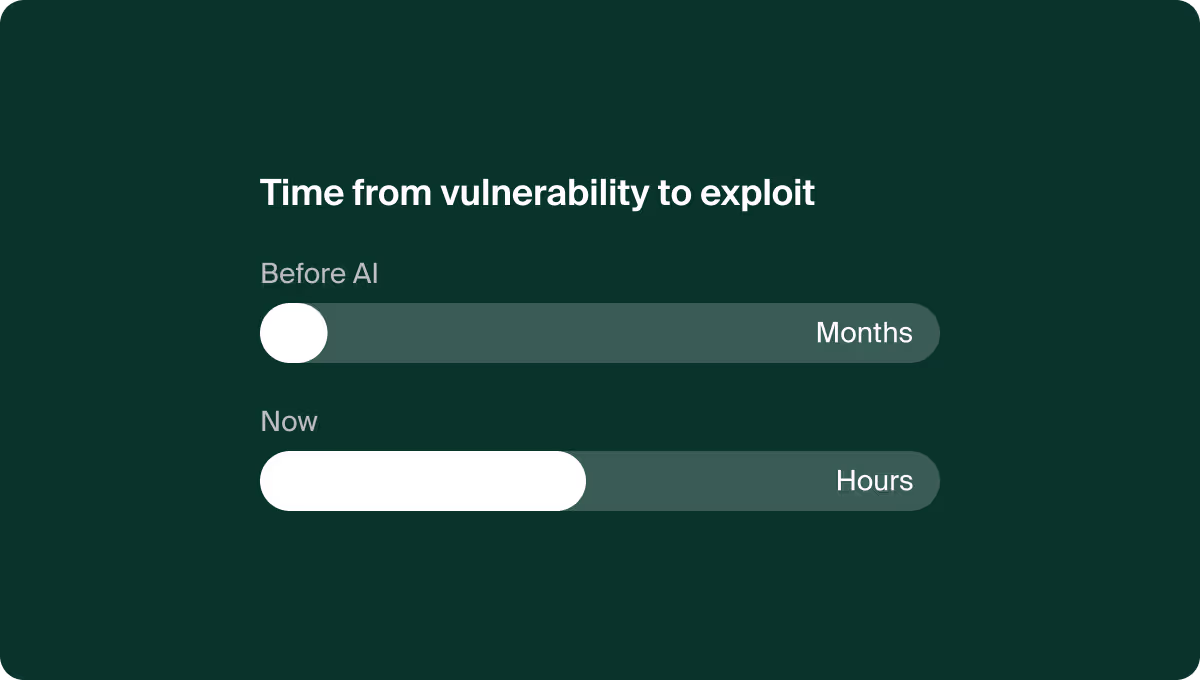

Over the past several years, AI has moved from a novelty in security workflows to a core part of how serious teams operate. That shift is now playing out in public: autonomous vulnerability-discovery capabilities are rewriting the economics of offense and defense in real time. The window between a flaw existing and a working exploit being weaponized has collapsed from months to hours. The standard of what constitutes reasonable defensive effort is being redrawn while most organizations are still reading the memo.

At Island, we have been building toward this moment for years. As a Chromium-based enterprise browser, we sit at the most exposed layer of the enterprise stack. The ecosystem we build on, and its vast dependency graph, is one of the richest and most scrutinized surfaces in software. We are simultaneously a consumer of a software ecosystem being stress-tested at unprecedented scale and a vendor whose product faces AI-accelerated scrutiny from researchers and adversaries alike.

This post describes, in technical terms, how we use AI in our own threat research and red teaming, what philosophy drives it, and why we believe the defender who moves first has real structural advantages in this new era.

Why the Island ecosystem is uniquely suited to AI-assisted research

Browsers are among the hardest targets in software. The Chromium codebase spans tens of millions of lines across renderer, IPC, extensions, network stack, sandbox, and enterprise policy surfaces. It integrates hundreds of upstream open-source components, each with its own release cadence and vulnerability timeline. It ships on a four-week stable channel, with security-only updates landing in days when needed.

The attack surface is vast, the release cadence is fast, and the dependency graph is deep. For a small security team, this is precisely the kind of problem where AI is not a luxury. It is the only way to operate at the scale the product demands. Manually reviewing every dependency delta across a release cycle is not tractable. Manually triaging every upstream advisory across hundreds of repos is not tractable. AI-assisted workflows turn these from intractable to routine.

The ecosystem benefits in two ways. First, every defender who invests in AI-assisted coverage closes gaps that adversaries would otherwise exploit. Second, the findings surfaced by AI-assisted review compound over time. Improvements upstream benefit every downstream consumer. It is a high-leverage place to apply AI capability, and the returns accrue to the whole community.

Our methodology: five workstreams, one philosophy

We operate AI-assisted security work across five standing workstreams. The pattern across all of them is consistent: agents for breadth and tirelessness, humans for judgment and prioritization. Neither side of that pairing is optional. Agents without human judgment produce noise at scale. Humans without agent leverage cannot reach the surface area the product actually demands.

1. Source-level review

Continuous agent-driven review of our own code and of the upstream components we depend on. We do not treat AI review as a replacement for human review. We treat it as a multiplier. An agent can read every PR, every dependency delta, every configuration change. A human cannot. The agent flags; the human adjudicates.

What we look for is targeted, not generic: unsafe deserialization paths, privilege-boundary crossings in IPC, new uses of dangerous APIs, regressions in sandbox invariants, and changes to code that touches authentication or secrets. The agent is given the context of our threat model, not left to guess what matters.
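To make the "agent flags, human adjudicates" split concrete, here is a deliberately simple sketch of the cheapest first pass such a pipeline might run before any model is involved: scanning the lines a unified diff adds for patterns tied to threat-model concerns. The rule names and regexes in `RISKY_PATTERNS` are hypothetical and purely illustrative; an LLM reviewer reasons about far more than string matches, and every flag still goes to a human.

```python
import re
from dataclasses import dataclass

# Hypothetical rules keyed to threat-model concerns; illustrative only.
RISKY_PATTERNS = {
    "unsafe-deserialization": re.compile(r"\b(?:pickle\.loads?|marshal\.loads?|yaml\.load)\s*\("),
    "dangerous-api": re.compile(r"\b(?:eval|exec|os\.system)\s*\("),
    "secret-handling": re.compile(r"(?i)\b(?:api[_-]?key|secret|token)\b\s*="),
}


@dataclass
class Flag:
    path: str
    line: str
    rule: str


def scan_unified_diff(diff_text: str) -> list[Flag]:
    """Flag lines *added* by a unified diff that match a risky pattern."""
    flags: list[Flag] = []
    path = "<unknown>"
    for raw in diff_text.splitlines():
        if raw.startswith("+++ "):
            # "+++ b/src/file.py" names the post-change file.
            path = raw[4:].removeprefix("b/")
        elif raw.startswith("+"):
            added = raw[1:]
            for rule, pattern in RISKY_PATTERNS.items():
                if pattern.search(added):
                    flags.append(Flag(path, added.strip(), rule))
    return flags


sample_diff = """\
--- a/app/loader.py
+++ b/app/loader.py
@@ -1,2 +1,4 @@
 import pickle
+obj = pickle.loads(blob)
+cfg = yaml.safe_load(text)
 print("ok")
"""

for flag in scan_unified_diff(sample_diff):
    print(flag.rule, flag.path, flag.line)
```

Only the `pickle.loads` line is flagged; `yaml.safe_load` is deliberately not matched. The resulting (rule, file, line) triples are the kind of input a human reviewer, or a model given the threat model as context, would then adjudicate.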

2. Faster patching pace

AI-driven remediation of vulnerable dependencies at scale, through dedicated internal tooling that hooks into our release cycle. The goal is to close the gap between a CVE being published and a fix landing in the tree, across hundreds of repos and ecosystems simultaneously.

This is where AI earns its keep most visibly. The dependency graph of a Chromium-based product is too large and too heterogeneous (Python, npm, Rust, Go, vendored C++ libraries) for manual remediation to keep up. Agents plan the update, run the tests, open the PR, surface the edge cases that need human attention, and move on.

Speed here is not vanity. It is the primary defensive lever in a world where time-to-exploit is measured in hours.
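The planning step that precedes each automated PR is, at its core, a join between what every repository pins and the first fixed version named in each advisory. The sketch below shows only that join. The repository names, package names, and the `Advisory` shape are hypothetical, and the naive version parser is a stand-in for ecosystem-specific comparison logic. Running tests, opening the PR, and escalating edge cases would hang off each work item it returns.

```python
from dataclasses import dataclass


def parse_version(v: str) -> tuple[int, ...]:
    # Naive dotted-numeric parsing; real tooling needs per-ecosystem rules
    # (PEP 440, SemVer pre-releases, Go pseudo-versions, and so on).
    return tuple(int(part) for part in v.split("."))


@dataclass
class Advisory:
    ecosystem: str
    package: str
    fixed_in: str  # first release that contains the fix


def plan_updates(pins, advisories):
    """Return one work item per pinned dependency below an advisory's fixed version."""
    # Simplification: at most one advisory per (ecosystem, package).
    by_package = {(a.ecosystem, a.package): a for a in advisories}
    plan = []
    for repo, ecosystem, package, pinned in pins:
        advisory = by_package.get((ecosystem, package))
        if advisory and parse_version(pinned) < parse_version(advisory.fixed_in):
            plan.append({"repo": repo, "package": package, "from": pinned, "to": advisory.fixed_in})
    return plan


pins = [
    ("renderer-tools", "npm", "example-parser", "1.4.2"),
    ("build-infra", "PyPI", "example-http", "2.31.0"),
    ("sync-service", "Go", "example.com/netlib", "0.17.0"),
]
advisories = [
    Advisory("PyPI", "example-http", "2.32.0"),
    Advisory("Go", "example.com/netlib", "0.17.0"),
]
print(plan_updates(pins, advisories))
```

Here only `build-infra` produces a work item: `example-parser` has no advisory, and `sync-service` already pins the fixed release.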

3. Supply chain and dependency triage

AI-assisted analysis of our dependency graph, advisory triage, and rapid blast-radius assessment during live supply chain events. When a new advisory drops or a compromised upstream package lands, the first question is always the same: do we use it, where, and what is the exposure? That question used to take hours of human investigation. Now it takes minutes, with the agent doing the graph traversal and the human doing the risk judgment.

Speed of triage, not just depth, is the deliverable. A six-hour head start on a supply chain compromise is the difference between a controlled response and a crisis.
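The graph-traversal half of that first question ("do we use it, and where?") is a reverse-reachability query: invert the "depends on" edges and walk breadth-first from the compromised package. A minimal sketch follows, assuming the dependency graph is already available as an adjacency map; the package names are hypothetical. Breadth-first search yields one shortest chain per affected node, which is usually the most useful evidence to hand to whoever makes the risk call.

```python
from collections import deque


def blast_radius(deps: dict[str, list[str]], compromised: str) -> dict[str, list[str]]:
    """Map every node that transitively depends on `compromised` to one shortest chain down to it."""
    # Invert "X depends on Y" into "Y is depended on by X" so we can walk upward.
    dependents: dict[str, list[str]] = {}
    for node, children in deps.items():
        for child in children:
            dependents.setdefault(child, []).append(node)

    chains = {compromised: [compromised]}
    queue = deque([compromised])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in chains:  # BFS: the first chain found is a shortest one
                chains[parent] = [parent] + chains[current]
                queue.append(parent)
    del chains[compromised]
    return chains


deps = {
    "browser-app": ["ui-kit", "http-client"],
    "ui-kit": ["string-utils"],
    "http-client": ["tls-lib"],
    "update-cli": ["tls-lib"],
    "string-utils": [],
}
for chain in blast_radius(deps, "tls-lib").values():
    print(" -> ".join(chain))
```

`ui-kit` and `string-utils` never appear in the result because no path from them reaches `tls-lib`.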

4. Pipeline and cloud red teaming

Agent-assisted offensive research against our own build, deploy, and cloud control planes. CI/CD pipelines, OIDC trust relationships, IAM policies, cross-account role chains: these are the fastest-growing attack surface in modern software, and they do not show up in traditional pentests. We use AI to enumerate them, model the attack paths, and generate proofs-of-concept that turn abstract misconfigurations into concrete tickets.

The goal is not to produce a report. It is to produce remediation tickets and architectural lessons that change how we build.
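Modeling attack paths through cross-account role chains can start as plain graph search. In the sketch below, an edge from A to B means principal A is permitted to assume B, as a role trust policy or an OIDC federation rule might allow. The principal names are hypothetical, and extracting real edges from cloud IAM configuration is assumed to happen elsewhere. Each path returned is a candidate finding that a human validates and turns into a ticket.

```python
def attack_paths(can_assume: dict[str, list[str]], start: str,
                 targets: set[str], max_hops: int = 5) -> list[list[str]]:
    """Enumerate simple assume-role chains from `start` to any sensitive target."""
    found: list[list[str]] = []

    def walk(node: str, path: list[str]) -> None:
        if node in targets and len(path) > 1:
            found.append(path)  # stop at the first sensitive role reached on this branch
            return
        if len(path) - 1 >= max_hops:
            return
        for nxt in can_assume.get(node, []):
            if nxt not in path:  # role chains can loop; never revisit a principal
                walk(nxt, path + [nxt])

    walk(start, [start])
    return found


trust = {
    "ci-oidc:main-branch": ["deploy-staging"],
    "deploy-staging": ["artifact-writer", "cross-account-ops"],
    "cross-account-ops": ["prod-admin", "deploy-staging"],
    "artifact-writer": [],
}
for path in attack_paths(trust, "ci-oidc:main-branch", {"prod-admin"}):
    print(" -> ".join(path))
```

The `max_hops` bound keeps enumeration tractable on large estates; a longer chain is not necessarily less dangerous, so the bound is a tuning choice rather than a risk judgment.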

5. Findings synthesis and ingestion

AI-assisted ingestion of vulnerability reports, scanner output, and internal findings into structured, actionable work. The space between a raw finding and a filed ticket, including deduplication, prioritization, owner assignment, linking to existing work, and extracting patterns across findings, is where most security programs lose information. AI-assisted workflows recover it.
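Deduplication and prioritization can be sketched as fingerprinting plus a sort. Below, findings with the same normalized rule and location collapse into one ticket that records every reporter and keeps the highest severity any of them assigned. The field names and severity scale are assumptions, and the hard part, normalizing free-form reports into a `rule` and `location`, is assumed to happen upstream of this code, which is where an LLM would plausibly help.

```python
import hashlib
from dataclasses import dataclass, field

SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    source: str    # scanner name, "bug-bounty", "internal-review", ...
    rule: str      # normalized weakness identifier (assumed already normalized)
    location: str  # file:line, package name, or cloud resource
    severity: str


@dataclass
class Ticket:
    fingerprint: str
    rule: str
    location: str
    severity: str
    sources: set[str] = field(default_factory=set)


def fingerprint(f: Finding) -> str:
    # The same rule at the same location is one issue, whoever reported it.
    return hashlib.sha256(f"{f.rule}|{f.location}".encode()).hexdigest()[:12]


def ingest(findings: list[Finding]) -> list[Ticket]:
    tickets: dict[str, Ticket] = {}
    for f in findings:
        key = fingerprint(f)
        ticket = tickets.get(key)
        if ticket is None:
            ticket = tickets[key] = Ticket(key, f.rule, f.location, f.severity)
        elif SEVERITY_RANK[f.severity] < SEVERITY_RANK[ticket.severity]:
            ticket.severity = f.severity  # keep the most severe rating any reporter gave
        ticket.sources.add(f.source)
    # Most severe first; among equals, issues corroborated by more sources first.
    return sorted(tickets.values(), key=lambda t: (SEVERITY_RANK[t.severity], -len(t.sources)))


findings = [
    Finding("sast", "sql-injection", "api/users.py:42", "high"),
    Finding("bug-bounty", "sql-injection", "api/users.py:42", "critical"),
    Finding("sca", "vulnerable-dependency", "example-http", "medium"),
]
for t in ingest(findings):
    print(t.severity, t.rule, t.location, sorted(t.sources))
```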

The philosophy that ties these workstreams together is straightforward: we point AI capability inward, at our own estate, before anyone else points it at us. Every one of these workstreams has a dark-mirror version being built by adversaries. The defender who invests first runs a faster loop.

What we actually use

We are pragmatic and vendor-agnostic. We evaluate each tool on whether it earns its keep against the attack surface it covers, and we rotate out what does not.

Our current stack includes tooling for agent-driven review and CI/CD integration, autonomous red team exercises, CSPM for cloud posture and blast-radius analysis, and an observability and automation framework for detection engineering and response. We also rely heavily on internally built tooling: a vulnerability report ingestion pipeline, exploitation proof-of-concept harnesses, and custom integrations where off-the-shelf coverage does not exist.

New autonomous vulnerability-discovery capabilities are exciting to us precisely because they plug into a methodology that is already running. The challenge is absorbing each new capability into workstreams without losing the human judgment that makes them effective.

Responding to the public conversation

The CSA "Mythos-Ready" framework published in April 2026 captures, in broad strokes, where the industry is heading. Our read is that it is directionally correct but still fundamentally reactive: patch faster, add agents, harden the basics. Useful as a baseline, but the real opportunity in this moment is not incremental.

Concrete moves we have made or committed to:

LLM-driven security review as a merge gate. Moving from ad-hoc agent use to a documented, enforced standard baked into our CI/CD pipeline.

Patch surge capacity planning. Our incident response framework now explicitly models concurrent, AI-driven disclosure storms rather than single-vendor events.

Risk metrics reframed around time-to-containment. The old likelihood-times-impact formula assumes human attacker cadence. It no longer holds.

AI agent adoption formalized. We are standardizing AI agent use across the security function, with governance and oversight commensurate with the access these agents have.

VulnOps as the long-term organizational model. A permanent vulnerability operations function, staffed and automated the way DevOps is, owning continuous discovery and remediation across our estate.
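At its simplest, "security review as a merge gate" means a CI step whose exit code blocks the merge. The sketch below assumes a hypothetical JSON report in which the agent lists findings with a `severity` and a human records a `human_disposition`; the gate fails while any high or critical finding is still undispositioned, which keeps the agent on inputs and the human on the decision. The threshold and disposition values are placeholders for whatever policy a team actually adopts.

```python
import json
import sys

BLOCKING_SEVERITIES = {"critical", "high"}  # hypothetical policy threshold
RESOLVED = {"fixed", "accepted_risk", "false_positive"}  # hypothetical disposition values


def gate(findings: list[dict]) -> tuple[bool, list[dict]]:
    """Return (passed, blockers): blockers are severe findings no human has dispositioned."""
    blockers = [
        f for f in findings
        if f.get("severity") in BLOCKING_SEVERITIES
        and f.get("human_disposition") not in RESOLVED
    ]
    return (not blockers, blockers)


def main(report_path: str) -> int:
    with open(report_path) as fh:
        findings = json.load(fh)
    passed, blockers = gate(findings)
    for b in blockers:
        print(f"BLOCKING: {b.get('rule')} at {b.get('location')}", file=sys.stderr)
    return 0 if passed else 1  # a non-zero exit fails the CI job and blocks the merge


if __name__ == "__main__" and len(sys.argv) == 2:
    sys.exit(main(sys.argv[1]))
```

In CI this would run as something like `python review_gate.py review-findings.json`, where both file names are hypothetical.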

The defender's asymmetric advantage

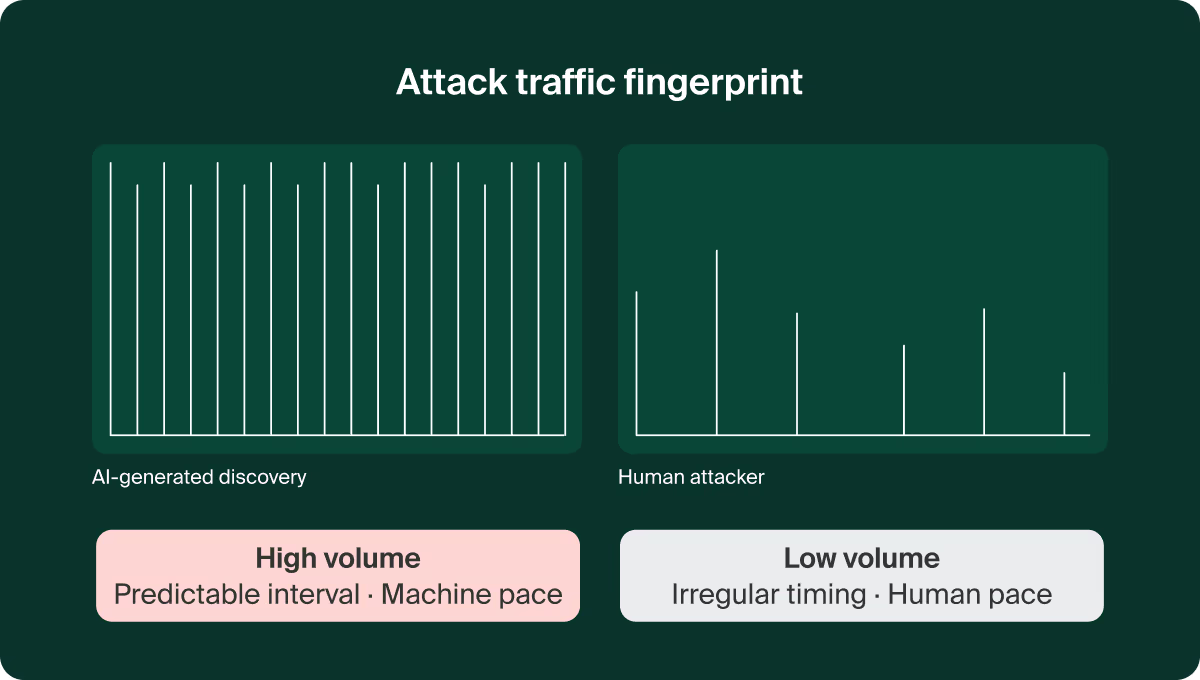

One perspective often missing from the public conversation: AI-assisted offense creates a behavioral fingerprint. High-volume autonomous discovery does not look like human attacker traffic. It has shape, cadence, and statistical properties that a human attacker would deliberately avoid. Defenders who learn to read that pattern gain a strategic advantage that simply does not exist when engaging human-only adversaries.

We will not detail our specific tactics publicly. This is live work and the value compounds with confidentiality. What we will say is this: the next generation of defensive engineering is not just "defend faster." It is "defend differently," exploiting the predictable patterns that high-volume autonomous discovery generates. This is one of the workstreams we are most optimistic about, and one we believe will distinguish mature defenders from those still running yesterday's playbook.

Best practices we believe in

Point AI inward first. Use it against your own code, your own pipelines, your own cloud, before anyone else does. The reconnaissance you do on yourself is the reconnaissance adversaries do not get to complete.

Make agent use boring and standard, not heroic. The moment AI-assisted review depends on a specific engineer deciding to run it, it has failed. Bake it into the merge gate, the release pipeline, the ticket intake. Make it the floor, not the ceiling.

Give agents the threat model, not just the code. An agent that knows what matters in your architecture produces signal. An agent that does not produces noise.

Keep humans on judgment, always. Prioritization, risk acceptance, disclosure decisions, and architectural calls belong to humans. Agents produce the inputs; humans produce the decisions.

Measure time-to-containment, not vulnerability counts. In a world where vulnerabilities are discovered at machine pace, the counting stat is obsolete. The question is how fast you close the window.

Budget for surge. AI-accelerated disclosure will produce concurrent multi-vendor patch storms. If your incident response framework assumes single-vendor events, it will break the first time one arrives.
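As a rough illustration of measuring time-to-containment instead of counts, the sketch below computes hours from disclosure to containment across events and reports the median, the worst case, and how many windows are still open. The event fields and timestamps are hypothetical, and where the clock starts (public disclosure or internal detection) and what counts as "contained" are policy choices each team has to make.

```python
from datetime import datetime
from statistics import median


def containment_summary(events: list[dict]) -> dict:
    """Summarize hours from disclosure to containment; open windows are counted, not averaged away."""
    durations = []
    still_open = 0
    for e in events:
        if not e.get("contained"):
            still_open += 1
            continue
        start = datetime.fromisoformat(e["disclosed"])
        end = datetime.fromisoformat(e["contained"])
        durations.append((end - start).total_seconds() / 3600)
    return {
        "median_hours": median(durations) if durations else None,
        "worst_hours": max(durations) if durations else None,
        "still_open": still_open,
    }


events = [
    {"id": "EVT-1", "disclosed": "2026-01-05T08:00:00", "contained": "2026-01-05T14:00:00"},
    {"id": "EVT-2", "disclosed": "2026-01-10T00:00:00", "contained": "2026-01-11T12:00:00"},
    {"id": "EVT-3", "disclosed": "2026-02-01T09:30:00", "contained": "2026-02-01T11:30:00"},
    {"id": "EVT-4", "disclosed": "2026-02-03T07:00:00", "contained": None},
]
print(containment_summary(events))
```

For these sample events the median is 6 hours and the worst case 36 hours, with one window still open. Reporting the worst case and the open count alongside the median matters because a single slow containment during a patch storm is exactly what an average hides.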

Conclusion

At Island, the math has always favored the defender who moves first. AI accelerates both sides of that equation. We intend to be on the side that accelerates faster: for our own product, for our customers, and for the Chromium ecosystem we are part of.

The capabilities emerging from autonomous vulnerability research are not threats to be weathered. In defenders' hands, they make a kind of security posture possible that simply was not achievable before. We are leaning in, sharing what we learn, and building toward a future where the defender's advantages are structural, not accidental.

If you are working on similar problems in browser security, Chromium-derived products, AI-assisted defensive engineering, or adjacent spaces, we would like to hear from you.

FAQs

Q: Why does Island focus on the browser as a security control point?

The browser is where work happens. It is where data moves, where SaaS applications are accessed, and increasingly where AI tools are used. Most security tools were not built to see inside a browser session, which makes the browser one of the least governed and most exploited layers in the enterprise stack.

Q: What makes Chromium-based products a particularly complex security target?

Chromium spans tens of millions of lines of code across multiple subsystems and integrates hundreds of upstream open-source components. Each component has its own release cadence and vulnerability timeline. Keeping pace with that surface area manually is not feasible, which is exactly why AI-assisted workflows are necessary rather than optional.

Q: How does Island use AI in its security research without introducing new risks?

We pair agents with human judgment at every step. Agents handle breadth (reviewing code, traversing dependency graphs, triaging advisories), while humans handle prioritization, risk acceptance, and architectural decisions. AI-assisted workflows are baked into standard processes rather than left to ad-hoc use, and agent access is governed in proportion to its scope.

Q: What is VulnOps and why does Island consider it the right long-term model?

VulnOps treats vulnerability discovery and remediation as a continuous operational function, staffed and automated the way DevOps is, rather than as a periodic audit or reactive response. In an environment where vulnerabilities can be discovered and weaponized in hours, a permanent, automated remediation capability is more appropriate than a sprint-based model.

Q: How does AI create an asymmetric advantage for defenders?

High-volume autonomous vulnerability discovery generates behavioral patterns that human attackers deliberately avoid. Defenders who learn to recognize those patterns gain detection and response advantages that do not exist against human-only adversaries. This is an active area of investment for Island's security team.
